The Ethics of Black Mirror: Nosedive

Evaluating the UX of the system that turns social media into a sovereign authority

System Overview

Citizens have a mandatory eye implant that lets them rate every social interaction and post from one to five stars. A citizen's rating directly affects their lifestyle, socioeconomic status, and well-being. Citizens who are rated zero are stripped of the implant, jailed, and banished from society.

Violates

Human Agency

System Feature: Ratings

“First, the computational system may undermine the human user's sense of moral agency. In such systems, users are placed into mechanical roles, often with little understanding of the larger purpose of their actions.” – Friedman & Kahn (1992)

Image Credit: Gemma Kingsley (Motion Graphics Art Director)

Human meaning and value are based on the system’s rating, which determines behavior and treatment, yet users seem detached from the fact that they are ultimately the ones in control of these ratings.

A positive rating does not signify a meaningful relationship between two people; it is transactional, and the rater remains ignorant of the other person’s beliefs, personality, morals, and ideals, all the things that make them human.

Image Credit: Doctor Psycho Podcast

Just as the person in Searle's Chinese Room thought experiment manipulates Chinese symbols without any understanding of Chinese, the people using the system in Nosedive manipulate the stars to rate each other without care for or understanding of the people they rate.

When offered the opportunity to see the emotional outburst of the protagonist, Lacie, as that of a fellow human experiencing mental distress, they stick to the system procedure. They have projected moral agency onto the system, acting as if the decision is the system’s.

In reality, humans have chosen to abandon empathy. They are mechanical, detached, and unaware of the effects the punishment will have on Lacie’s psyche, and evade accountability through their system usage.

Upholds

Trust

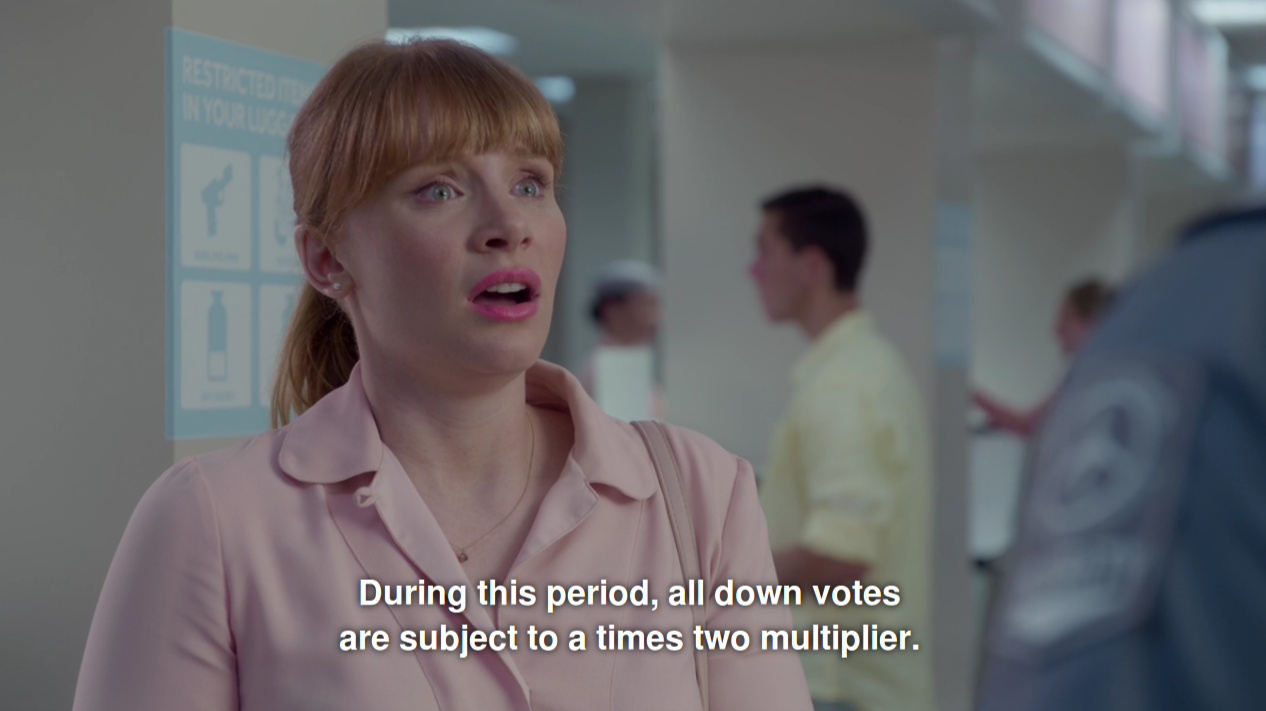

System Feature: Double Damage

“Trust exists between people who can experience goodwill, feel vulnerable, and experience betrayal (Baier, 1986; Friedman, Kahn, & Howe, 2000; Kahn & Turiel, 1988). On a societal level, trust enhances social capital.” – Friedman & Kahn (2003)

Image Credit: Gemma Kingsley (Motion Graphics Art Director)

Ratings are currency, affect social capital, leave users vulnerable, and cause them to experience betrayal several times throughout their system usage. Friedman & Kahn (2003) discuss how violations of trust affect us psychologically, leaving us vulnerable, hurt, and embarrassed. Double damage means any low rating counts twice as much; this results in Lacie feeling betrayed by the system (and its users) and pushes her closer to her breaking point with each bad review.

In other cases, double damage may cause citizens to work harder to regain goodwill or earn exclusive perks (luxurious homes, better healthcare, popularity, etc.). Trust in the system is so high that society reinvents itself around the technology and its users put it on a pedestal.

Image Credit: Netflix

Violates

Autonomy

System Feature: Holographic Ads

“Autonomy is protected when users are given control over the right things at the right time. The hard work is deciding what those features are and when those conditions occur.” – Friedman & Kahn (2003)

Image Credit: Netflix

Advertisements are used to influence users’ goals and expectations, but the system's misrepresentation undermines autonomy. The ad preys on Lacie's deepest desires by presenting the ideal life she craves, and only after she expresses her decision to commit to the home does it reveal a condition that requires further submission to the system and abandonment of control. As a result:

Lacie’s decisions become more erratic in a desperate attempt to achieve the unrealistic goals and expectations presented to her in the hyperbolic advertisement.

The advertisement places more power and dependence on the system while diminishing Lacie’s autonomy.

The system offers no mechanisms for Lacie to express her frustrations, control the conditions, or receive support, nor does it adapt to her goals.

Violates

Identity

System Feature: Singular Accounts

“Too much multiplicity can lead to schizophrenia; too much unity can stifle psychological growth and impair social functioning (e.g., it's impractical to present the same “persona” to one's boss and child).” – Friedman & Kahn (2003)

Image Credit: Gemma Kingsley (Motion Graphics Art Director)

Users cannot present themselves one way to a friend and another way to a co-worker. This unification of personas results in emotional repression, psychological decline, and banishment from social gatherings or society at large.

For example, the breakup of Chester, Lacie’s coworker, is no longer a personal matter he can choose to separate from his work life, but a chance for the office to publicly humiliate and collectively shun him.

Once he is a pariah, he cannot create a new account and start over. He is stripped of the technology, which directly strips him of his identity.

Users cannot fine-tune their audience, which limits the diversity of their groups and interactions. This singular identity is required to integrate well with a singular group, and the system never allows exploration of other communities.

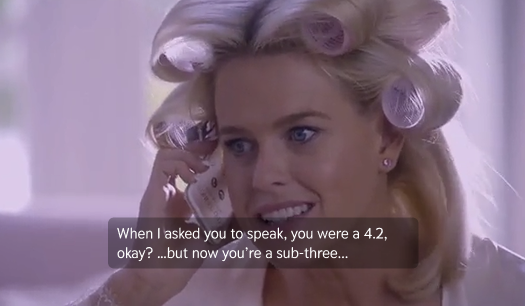

Violates

Human Welfare

System Feature: “Friends”

“Psychological welfare involves higher-order emotional states, including comfort, peace, and mental health. [Web connectivity] benefits include enhanced friendships; harms include new betrayal forms (e.g., a chat room “friend” turning out to be a bot).” – Friedman & Kahn (2003)

The friendship feature causes harm to mental health and well-being. “Friends” are made out of survival and fear, rather than comfort or compatibility. The anxiety around being excluded drives usage, diminishing well-being with each failed or broken connection and turning the feature into a coerced transaction.

A welfare-based friendship feature would follow Friedman & Kahn’s emphasis on enhancing bonds and comfort with minimal harm; the current design exacerbates harm by misguiding user expectations, exploiting their goals, and causing mental distress.

The feature encourages fragile and conditional relationships that result in psychological breakdowns when the user's expectation does not align with the system’s portrayal.

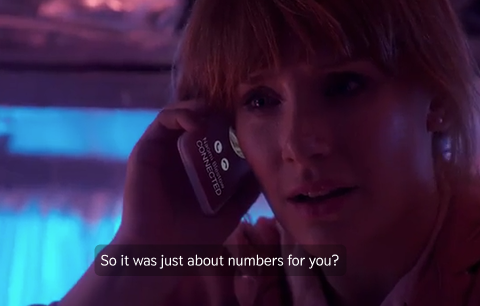

When Lacie is confronted with the reality that these “friends” are meaningless in terms of authenticity and depth, her trust is violated, and she is psychologically wounded, embarrassed, and dejected.

Image Credit: Netflix

Violates

Privacy

System Feature: Public Accounts

“Privacy refers to an individual's claim, entitlement, or right to determine what information about themselves can be communicated to others.” – Schoeman (1984)

Image Credit: Gemma Kingsley (Motion Graphics Art Director)

Users cannot make their accounts private. Friedman & Kahn (2003) explain that privacy is protected when users are allowed to control how their information is shared, captured, and surveilled. However, system settings are seemingly nonexistent, forcing users to accept the technology’s default setup, which neither gives them control nor informs them of privacy risks.

Their names, ratings, posts, profile pictures, and everything else associated with the system are mandatory public knowledge. This violates their right to control their data and its dissemination.

There is little information on how their data is captured and used, and every post or rating is made public by default. The technology is never shown to offer users the opportunity to anonymize themselves, which is a privacy, safety, and identity risk.

Violates

Authenticity

System Feature: Posting

“Eliza could not understand the stories it was being told; it did not care about the human beings who confided in it. […] people did not care if their life narratives were really understood. The act of telling them created enough meaning on its own.” – Turkle (2007)

Image Credit: Gemma Kingsley (Motion Graphics Art Director)

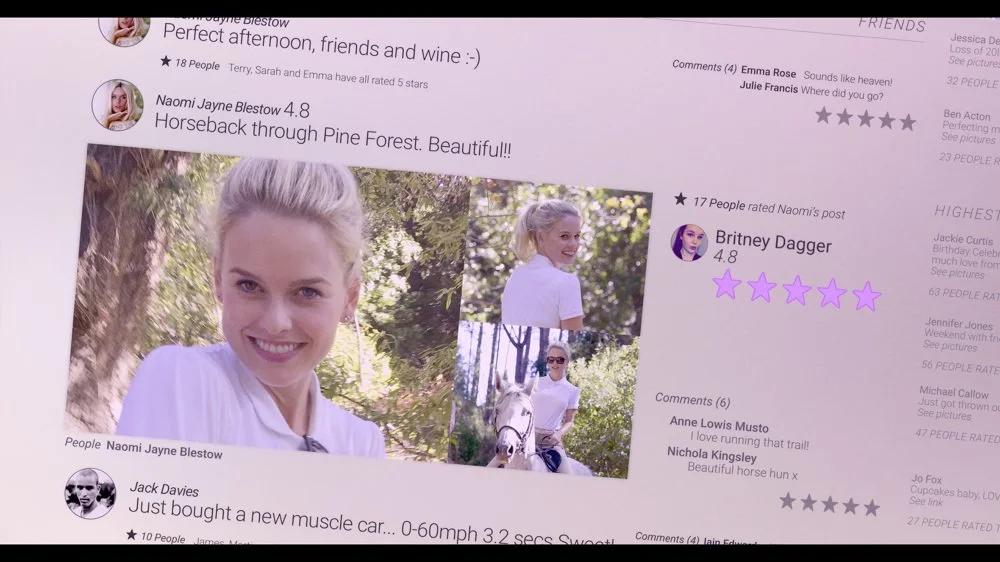

Throughout the episode, there is a clear line between performative authenticity and genuine authenticity—the vehicle for both is the posted content that drives all social interaction. Turkle (2007) discusses how relational artifacts push our “Darwinian buttons,” which trigger a response that simulates “the feeling of a relationship” and potentially incites narcissistic behavior. Interacting with posts through the system simulates the feeling of relationships, but it is nothing more than a parlor trick.

Posts allow people to believe they know one another and have an essential role in each other’s lives. In reality, they know nothing about one another and know their connection is tentative, but it is taboo to acknowledge that inauthenticity.

Posting feeds into the numbers game, validating the narcissistic behaviors of “High Fours”: barring people from entry, proximity, access, respect, and dignity.

Posting inspires (and rewards) performative authenticity with higher ratings, and thus better socioeconomic status; otherwise, “...people behave as though they no longer value living things and authentic emotion” (Turkle, 2007) and cast them out.

What is the catalyst for Naomi’s wedding invitation (and for her conversing with Lacie for the first time in years)? A post that gives the illusion they’re old friends with a deep connection to a doll named Mr. Rags. In reality, they harbor deep animosity and resentment, but the performance of authenticity is a lucrative opportunity for them both. It raises the question: Were they ever truly friends if the basis and maintenance of the relationship rely on the emotion that posts perform? Posts do not care about the stories behind them or the people interacting with them, but posting allows people to create narratives and meaning where there is none.

Image Credit: Netflix

Violates

Problem Solving and Decision Making

System Feature: System Algorithm

If we were to consider the system’s algorithm, conditional logic and forward chaining would be at the core. Forward chaining begins with available data, then evaluates all possible actions, before performing the best one, and the feedback determines whether the action was good or bad (Proctor & Van Zandt, 2008). Each element of social interaction is considered the initial piece of data (eye contact, attitudes, behaviors, compliments), then evaluated for a rating (one to five stars), and the feedback determines whether the decisions and treatment people receive from the system and its users remain positive or negative.

Conditional reasoning applies if-then logic, such as:

If a user is seen with a sub-three friend, then their rating will decrease.

If the user yells at the airport worker, then they receive double damage.

If the user interacts with high-fours, then their rating will increase.
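The if-then rules above can be sketched as a small rule engine. This is only an illustration, not the system shown in the episode: it uses forward chaining in the inference-engine sense (start from known facts, fire every rule whose premises hold, repeat until nothing new is derived). The `Rule` class, the `forward_chain` function, and all fact names are hypothetical. The fourth rule is an assumed chained rule based on the earlier description of double damage as making any low rating count twice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """An if-then rule: when every premise is a known fact, add the conclusion."""
    premises: frozenset
    conclusion: str


# Hypothetical fact names paraphrasing the rules listed in the essay.
RULES = [
    Rule(frozenset({"seen_with_sub_three_friend"}), "rating_decreases"),
    Rule(frozenset({"yells_at_airport_worker"}), "double_damage"),
    Rule(frozenset({"interacts_with_high_fours"}), "rating_increases"),
    # Assumed chained rule: under double damage, a low rating counts twice.
    Rule(frozenset({"double_damage", "receives_low_rating"}),
         "low_rating_counts_twice"),
]


def forward_chain(facts, rules):
    """Fire rules whose premises are all known until no new fact appears."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for rule in rules:
            if rule.premises <= known and rule.conclusion not in known:
                known.add(rule.conclusion)
                changed = True
    return known


observed = {"yells_at_airport_worker", "receives_low_rating"}
derived = forward_chain(observed, RULES) - observed
print(sorted(derived))  # ['double_damage', 'low_rating_counts_twice']
```

The second pass through the loop is what makes this chaining rather than a single lookup: "double_damage" is derived first, and only then can the rule that depends on it fire. Nothing in the loop lets the rated user contest or override a conclusion, which mirrors the lack of user control the essay describes.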

Ultimately, the algorithm is an oppressive system that uses rule-based conditions to undermine user autonomy, penalize authenticity, and reward obsession and performative behavior, while hindering psychological, financial, and social growth. Good UX design is based on sound ethics that support users’ decision-making and well-being, grant users control, consider their welfare, and value authenticity.

References

Friedman, B. & Kahn, P. H. (1992). Human agency and responsible computing: Implications for computer system design. Journal of Systems and Software, 17(1), 7–14.

Friedman, B. & Kahn, P. H. (2003). Human values, ethics, and design. In J. A. Jacko & A. Sears (Eds.), The human-computer interaction handbook (pp. 1177–1201). Mahwah, NJ: Lawrence Erlbaum Associates.

Proctor, R. W. & Van Zandt, T. (2008). Human factors: In simple and complex systems. Boston: Allyn and Bacon. (Chapter 4, 9–11)

Turkle, S. (2007). Authenticity in the age of digital companions. Interaction Studies, 8(3), 501–517.

Social Bias

“Social bias stems from social institutions, practices, and attitudes, occurring when computer systems embody biases that exist independently of, and usually before, software creation.” – Friedman & Kahn (2003)

Image Credit: Netflix

How were new users rated when the technology was first created? We could hypothesize everyone starts at zero and works their way up, but it is more likely that the algorithm leveraged current social biases and social media platform structure (economic status, social status, influencers) to inspire societal dependence.

The upper class became “High Fours” (4.0+ ratings) and were gifted perks unavailable to “mid-to-low range” people. “High Fours” are seen as elite, luxurious, and put-together, the same biases through which the upper class is viewed.

The ratings system depends on mid-to-low range people’s desperation to climb the social ranks through increased usage, conformity, and submission to the technology.

Ratings determine social attitudes and behaviors toward citizens: people with lower ratings are shunned and avoided, while those with higher ratings are desired and respected.

At no point does the system discourage social bias or offer users a chance to opt out of displaying, receiving, or sending ratings. The interface relies on pre-existing class disparities and inequalities to determine the worth of its users.